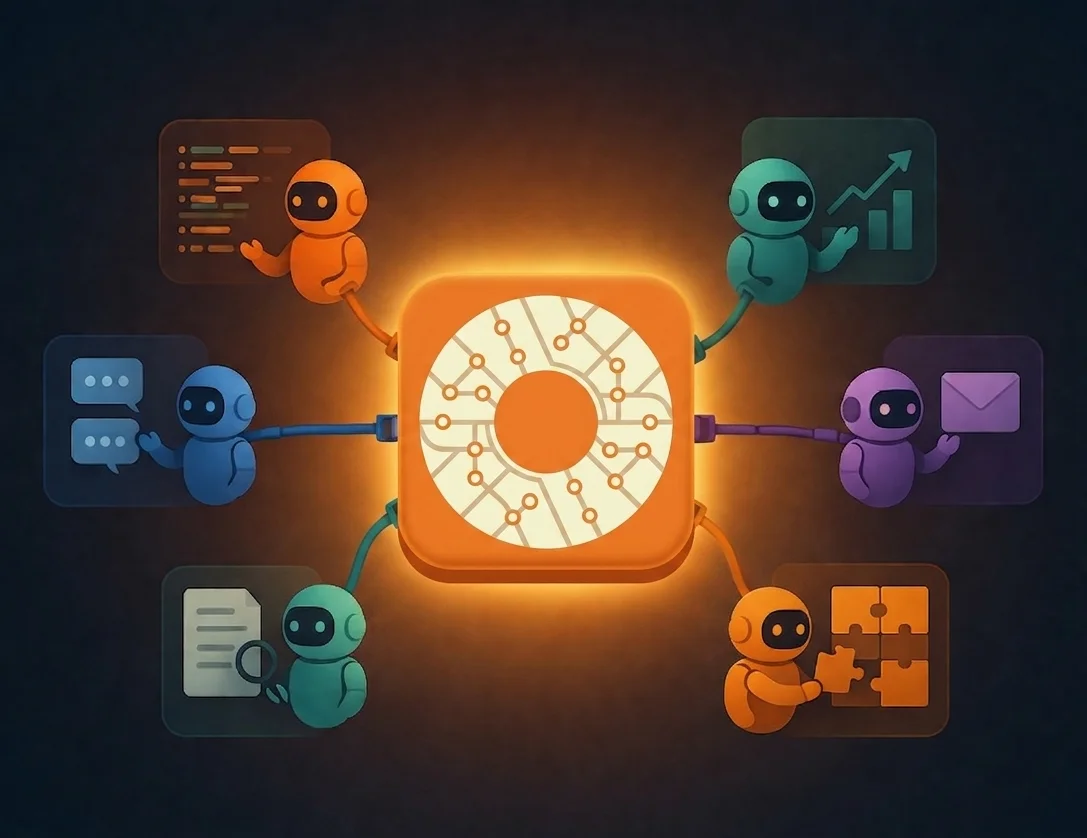

Orcheo and Harness Engineering: Where It Fits and Where It Can Grow Next

A grounded look at how the Orcheo ecosystem relates to harness engineering: where Orcheo already mitigates agent failure modes through structure, observability, and guardrails, and where stronger verification and behavioural checks come next on the roadmap.